Apple Intelligence Features: Secret iPhone AI Tools You Need Now

Your iPhone just became the smartest device you own, and you’re probably using less than half of what it can do.

If you upgraded to iOS 18.1 or later and thought, “That’s it?”… you missed the quiet revolution happening inside your pocket. Apple didn’t just add a few gimmicks. They embedded a full AI productivity layer into the operating system itself. And most people have no idea these features even exist, let alone how to activate them.

Introduction: The AI Layer Hiding in Plain Sight

Let’s rewind for a second.

For years, Apple watched from the sidelines while Google, Microsoft, and OpenAI grabbed headlines with their AI announcements. ChatGPT exploded onto the scene in late 2022. Microsoft poured billions into Copilot. Google rebuilt its entire search experience around Gemini. And Apple? Apple stayed quiet.

That silence wasn’t inaction. It was strategy.

In June 2024, Apple unveiled Apple Intelligence at WWDC, and then rolled it out in phases starting with iOS 18.1 in October 2024, followed by iOS 18.2 in December 2024, and iOS 18.4 in early 2025. Unlike competitors who bolted AI onto existing products, Apple wove it directly into the fabric of the iPhone experience. Writing tools live inside every text field. Siri now understands context across apps. Notifications get summarized before you even open them. Photos organize themselves. And a deeply integrated ChatGPT partnership gives you GPT-4o level intelligence without ever leaving your native apps.

Think of it like this: other companies handed you a new power tool and said, “Figure it out.” Apple quietly rewired your entire house so the lights turn on exactly when you need them.

The timing matters, too. According to a 2024 McKinsey Global Survey on AI, 72% of organizations now use AI in at least one business function, up from 55% just a year earlier. Individuals are following the same trajectory. A 2023 Gartner forecast projected that by 2026, more than 80% of enterprises will have used generative AI APIs or deployed generative AI-enabled applications, up from less than 5% in 2023. The shift isn’t coming. It’s here. And for the billion-plus people carrying iPhones, the AI future is already installed on their device.

“Apple Intelligence represents the biggest shift in iPhone functionality since the App Store. The difference is, most users haven’t discovered it yet.”

Here’s the thing that makes Apple Intelligence different from every other AI productivity trend: you don’t need to download a new app, sign up for a subscription, or learn a new interface. These Apple Intelligence features are baked into the apps you already use every day. Mail. Messages. Notes. Safari. Photos. Siri.

But Apple, in typical fashion, didn’t exactly shout about every feature from the rooftops. Many of the most powerful capabilities are tucked behind long-presses, buried in settings menus, or activated by features you’d never stumble upon organically.

That’s what this guide is for.

We’re going to walk through every major Apple Intelligence feature available on your iPhone right now, explain exactly how to activate and use each one, estimate how much time each can save you per week, and reveal the secret tricks that even power users are missing. Whether you’re a busy professional trying to reclaim your calendar, a student drowning in reading, a creative looking for a smarter workflow, or just someone who wants their phone to actually work smarter, these iPhone AI tools are about to change your daily routine.

Let’s unlock every single one.

1. Apple Intelligence Writing Tools: Your Built-In AI Editor Across Every App

The single most underused set of Apple Intelligence features lives inside every text field on your iPhone. Not just Notes. Not just Mail. Every. Single. Text. Field.

Apple Intelligence Writing Tools give you the ability to rewrite, proofread, and summarize any text you’ve written or received. Select text in any app, tap the new Writing Tools option, and you get instant access to AI-powered editing that rivals dedicated writing apps costing $20 per month.

Here’s what most people miss: these tools aren’t just spell-checkers. They understand tone, intent, structure, and audience. And they work in three distinct modes that serve very different purposes.

How to Access Writing Tools

- Select any text in any app (Messages, Mail, Notes, Safari, third-party apps)

- Tap “Writing Tools” in the contextual menu that appears

- Choose your mode: Proofread, Rewrite, or Summarize

The Three Modes Explained

- Proofread: This goes far beyond autocorrect. Apple Intelligence analyzes grammar, punctuation, sentence structure, word choice, and clarity. It shows you exactly what it changed and why, with an explanation for each edit. Think of it as a patient English teacher reviewing your work in real-time.

- Rewrite: This rewrites your selected text in one of three tones: Friendly, Professional, or Concise. Writing a message to your boss? Switch to Professional. Texting a friend about plans? Hit Friendly. Need to trim a rambling email down to its core point? Concise cuts the fat instantly.

- Summarize: Select a long email thread, a pasted article, or your own sprawling notes, and Apple Intelligence condenses it into a clean, readable summary. This alone can save you 15 to 20 minutes per day if you deal with long email chains.

Who Benefits Most

Professionals who write dozens of emails daily save the most time here. Students editing papers on the go get a massive boost. Anyone who’s ever stared at a text message for five minutes wondering if the tone was right will feel like they just hired a communication coach.

Estimated time saved per week: 2 to 4 hours for heavy email and messaging users.

The Secret Trick

Here’s what almost nobody knows: Writing Tools work inside third-party apps too. Drafting a LinkedIn post in the LinkedIn app? Select, tap Writing Tools, and refine it without ever copying text elsewhere. Composing a Slack message? Same thing. Apple built this at the system level, which means it follows you everywhere.

2. Siri’s AI Upgrade: The iPhone AI Tool That Finally Understands You

Let’s be honest. For years, Siri was a punchline. Ask it anything beyond setting a timer, and you’d get a “Here’s what I found on the web” response that made you want to throw your phone.

That era is over.

Apple Intelligence transformed Siri from a basic voice assistant into a contextually aware AI that understands natural language, maintains conversational memory, and can take actions across apps. The visual redesign is the first hint. Instead of the old Siri orb, you now see a glowing light that wraps around the edges of your entire screen. But the real changes are under the hood.

What’s New With Siri

- On-screen awareness: Apple previewed a Siri that can see what’s on your screen and act on it. If someone texts you their address, you would say “Get directions to that address” without specifying it. Apple said in March 2025 that this capability is delayed, so don’t expect it on iOS 18.4.

- Conversational continuity: You can ask follow-up questions without re-establishing context. “What’s the weather in Tokyo?” followed by “What about this weekend?” Siri remembers you’re talking about Tokyo.

- Type to Siri: Don’t want to talk out loud? Double-tap the bottom of your screen and type your request silently. This is a game-changer in meetings, libraries, and anywhere voice commands feel awkward.

- In-app actions: Apple also previewed Siri performing specific actions inside Apple apps and select third-party apps, such as “Show me photos from my hike last Saturday,” “Open the email from Sarah about the project deadline,” or “Summarize this article in Safari.” Much of this expanded action support is part of the same delayed rollout, so test each request on your own device.

Who Benefits Most

Anyone who dismissed Siri years ago and never looked back. Professionals who need hands-free productivity during commutes. People with accessibility needs who rely on voice interaction. Parents juggling kids and phones simultaneously.

Estimated time saved per week: 1 to 2 hours, depending on usage frequency.

The Secret Trick

Here’s a powerful one, with a caveat: Apple previewed a Siri that finds specific information across your Apple ecosystem using personal context. “When does my flight land?” Siri would check your email confirmations. “What was the name of the restaurant David recommended?” Siri would scan your message history. Apple describes this personal context awareness as built on on-device processing that keeps your data on your iPhone, but it announced in March 2025 that the feature is delayed. Try these requests after each iOS update to see whether it has reached your device.

3. Notification Summaries: The Apple Intelligence Feature That Kills Information Overload

If your lock screen looks like a news ticker from a disaster movie, this feature will change your relationship with your phone.

Apple Intelligence Notification Summaries automatically condense multiple notifications from the same app into a single, readable brief. Instead of seeing 14 separate messages in a group chat, you see a one-line summary: “The group is discussing dinner plans for Friday. Sarah suggested Italian.”

This isn’t just cosmetic. It’s a fundamental shift in how you interact with information on your iPhone. Instead of processing every individual ping, your brain gets the executive summary and you decide whether to dive in.

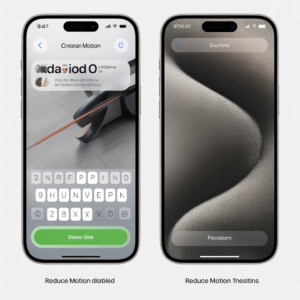

How to Enable Notification Summaries

- Go to Settings > Notifications > Summarize Notifications

- Toggle it on for each app individually (this is important, you get granular control)

- Summaries appear on your lock screen and in Notification Center

What Gets Summarized

- Messages: Group chats, rapid-fire conversations

- Mail: Email threads with multiple replies

- News and social apps: Breaking stories, trending topics

- Third-party apps: Many popular apps now support this feature

Who Benefits Most

Anyone drowning in notifications. Remote workers juggling Slack, email, and messaging apps. People who check their phones 80+ times per day and want to cut that number in half. Leaders managing multiple team threads.

Estimated time saved per week: 1 to 3 hours of reduced “context-switching” time.

The Secret Trick

You can customize which apps get summaries and which don’t. Keep summaries on for noisy group chats and news apps. Turn them off for direct messages from your partner or VIP contacts where you want to see every message immediately. This selective approach means you get peace without missing what matters.

A word of caution: some users have reported that summaries can occasionally mischaracterize the tone of a message thread, especially with sarcasm or nuanced conversations. Apple has improved accuracy with each iOS update, but it’s worth spot-checking summaries for important threads rather than relying on them blindly.

4. Apple Intelligence in Mail: Priority Messages and Smart Replies That Save Hours

Email is where productivity goes to die. The average professional spends 28% of their workweek reading and answering emails, according to McKinsey’s research on workplace productivity. Apple Intelligence in Mail attacks this problem from multiple angles.

Priority Messages

Open your Mail app and you’ll notice a new section at the top of your inbox: Priority. Apple Intelligence scans your incoming emails and surfaces the ones that are time-sensitive, action-oriented, or from your most important contacts. It doesn’t just look at who sent the email. It reads the content and determines urgency.

An email from your boss asking for a deliverable by end of day? Priority. A newsletter you subscribed to three years ago and never read? Buried.

This alone restructures how you approach your inbox. Instead of scrolling through 50 messages hoping you don’t miss something important, you start with what matters.

Smart Reply

When you open an email, Apple Intelligence analyzes the content and suggests contextually relevant quick replies. Not generic “Thanks!” buttons, but responses that address specific questions asked in the email.

If someone asks “Can you meet Thursday at 2pm?” the Smart Reply might suggest “Thursday at 2 works for me!” or “I’m not available Thursday, but how about Friday?” It reads the email. It understands the ask. It drafts the response.

Email Summarization

Long email threads get the same summarization treatment as notifications. At the top of any lengthy conversation, you’ll see a brief summary of the entire thread. This is especially powerful for threads where you’ve been CC’d and need to quickly understand context without reading 30 messages.

Who Benefits Most

Professionals who receive 100+ emails daily. Executives who are CC’d on endless threads. Sales teams managing prospect communications. Anyone who has ever opened their inbox on Monday morning and immediately felt overwhelmed.

Estimated time saved per week: 3 to 5 hours for heavy email users.

The Secret Trick

Combine Priority Messages with Focus Modes. Set up a “Deep Work” Focus Mode that only allows notifications from Priority emails. Now you get the critical stuff without the noise, and you can batch-process everything else later. This two-feature combination creates a productivity system that rivals expensive third-party email management tools.

5. Image Playground and Genmoji: Apple Intelligence Features for Visual Creativity

Not every productivity tool is about spreadsheets and emails. Sometimes, the fastest way to communicate is visually. Apple Intelligence introduced two creative features that are surprisingly useful beyond just fun.

Image Playground

Image Playground lets you generate AI images in three styles: Animation, Illustration, and Sketch. You can create images from text descriptions, combine concepts with people from your photo library, or use suggested themes.

Here’s where it gets practical:

- Presentations: Need a custom illustration for a slide deck? Generate one in seconds instead of spending 30 minutes searching stock photo sites.

- Social media: Create unique visual content for posts without graphic design skills.

- Education: Teachers can generate custom illustrations for lesson materials.

- Communication: Sometimes a custom image communicates a concept better than a paragraph of text.

Image Playground is available as a standalone app, but it’s also integrated directly into Messages, Notes, Freeform, and Keynote.

Genmoji

Genmoji takes the concept further by letting you create custom emoji from text descriptions. Type “a golden retriever wearing a party hat eating pizza” and you get a unique emoji that doesn’t exist in any standard set.

This sounds trivial until you realize how much communication happens through visual shorthand. Teams create custom Genmoji for inside jokes. Parents make personalized stickers of their kids. Marketers generate on-brand visual elements in seconds.

Who Benefits Most

Content creators, social media managers, teachers, parents, and anyone who communicates visually. Marketing teams save significant time on asset creation. Presenters who need quick custom visuals.

Estimated time saved per week: 1 to 2 hours for visual content creators.

The Secret Trick

In Image Playground, you can use photos of real people from your photo library as the basis for generated images. The AI creates a stylized version of that person in whatever scene you describe. This means you can create personalized birthday cards, team celebration images, or fun social content featuring real people, all generated on-device with Apple’s privacy protections.

6. Safari’s Apple Intelligence Features: AI-Powered Browsing and Research

Safari might be the sleeper hit of the entire Apple Intelligence rollout. While everyone was focused on Siri and Writing Tools, Apple quietly transformed its browser into a research powerhouse.

Webpage Summarization

Here’s the feature that changes how you consume information online: open any article in Safari Reader mode, tap the Apple Intelligence icon, and get an instant summary of the entire page. No more skimming. No more reading three paragraphs to realize an article isn’t relevant. You get the key points in seconds and decide whether the full read is worth your time.

For research-heavy work, this is transformative. Imagine you’re evaluating 10 articles on a topic. Instead of spending an hour reading all 10, you summarize each in 15 seconds and deep-read only the 3 that are most relevant. At about 6 minutes per full read, that’s roughly 40 minutes saved on a single research session.
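The arithmetic behind that estimate can be checked with a short sketch. It assumes the hour of reading is spread evenly across the 10 articles (6 minutes each), which the example implies but does not state. The function and parameter names are illustrative only.

```python
def research_minutes_saved(articles=10, full_read_total_min=60.0,
                           summary_sec=15.0, deep_reads=3):
    """Estimate minutes saved by summarizing every article, then deep-reading a few."""
    # Assumption: the full-read time is split evenly across articles (6 min each).
    per_article_min = full_read_total_min / articles
    # Time spent generating and skimming one summary per article (10 x 15 s = 2.5 min).
    summarizing_min = articles * summary_sec / 60.0
    # Time spent fully reading only the most relevant articles (3 x 6 min = 18 min).
    deep_reading_min = deep_reads * per_article_min
    return full_read_total_min - (summarizing_min + deep_reading_min)


print(research_minutes_saved())  # 39.5
```

Changing `deep_reads` or `summary_sec` shows how sensitive the saving is to your own habits: deep-reading 5 articles instead of 3 cuts the saving to 27.5 minutes.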

Highlights

Safari’s Highlights feature automatically detects key information on webpages, including directions, phone numbers, summaries of articles, and relevant quick links. When you visit a page, Highlights surfaces the most actionable information without requiring you to scan the entire page.

Visiting a restaurant’s website? Highlights pulls out the address, phone number, and hours immediately. Reading a lengthy product review? Highlights identifies the verdict and key pros and cons.

Reader Mode + Intelligence

The enhanced Reader Mode strips away ads, navigation, and clutter, then pairs with Apple Intelligence summarization to give you the cleanest, fastest reading experience on any mobile browser.

Who Benefits Most

Students, researchers, journalists, content creators, and anyone who reads more than 30 minutes of web content daily. Professionals who need to stay current on industry news. Shoppers comparing products across multiple review sites.

Estimated time saved per week: 2 to 4 hours for research-heavy users.

The Secret Trick

Here’s the feature most people walk right past: when you’re viewing a PDF in Safari, Apple Intelligence can summarize it too. You don’t need a separate PDF reader app. Open a PDF, use the summarization tool, and get the key points instantly. For professionals who receive contracts, reports, and whitepapers via web links, this is enormous.

7. Photos App Intelligence: The iPhone AI Tool That Organizes Your Memories

The Photos app has always been decent at organizing pictures by date and location. But Apple Intelligence elevates it into something that feels almost magical.

Natural Language Search

Forget scrolling through thousands of photos. Just type what you’re looking for in natural language. “Photos of my dog at the beach” works. “Sunset pictures from last summer” works. “Screenshots of recipes” works. The AI understands objects, scenes, contexts, and even concepts within your photo library.

This isn’t new for Google Photos users. But Apple does it entirely on-device, which means your photo analysis never touches a cloud server. Your most personal images stay private while still being searchable.

Clean Up Tool

This is Apple’s answer to Google’s Magic Eraser. The Clean Up tool identifies and removes distracting background objects from photos. A photobomber in your vacation shot? Gone. A trash can ruining an otherwise perfect landscape? Removed. Power lines cutting across a sunset? Erased.

The tool is smart enough to identify removable objects automatically and suggest them, or you can manually select what you want removed.

Memory Movies

Apple Intelligence creates curated memory movies from your photo library based on natural language descriptions. Type “Our family trip to the mountains” and the AI finds relevant photos and videos, sequences them into a narrative, adds music, and creates a shareable movie. What would take 45 minutes of manual editing happens in 30 seconds.

Who Benefits Most

Everyone with a photo library larger than a few hundred images. Parents documenting their children’s lives. Travelers with thousands of vacation photos. Social media users who want to find and share specific memories quickly. Small business owners who photograph products or events.

Estimated time saved per week: 1 to 2 hours for frequent photo users.

The Secret Trick

The natural language search is more powerful than most people realize. You can combine multiple criteria: “Photos of Sarah at restaurants in 2024.” You can search by emotion: “happy group photos.” You can even search by activity: “photos where people are dancing.” The more specific you get, the more impressive the results.

8. Focus Modes and Apple Intelligence Integration: Automated Productivity Systems

Focus Modes existed before Apple Intelligence, but the AI integration transforms them from a manual toggle into an intelligent productivity system.

Intelligent Breakthrough Delivery

When you’re in a Focus Mode (Do Not Disturb, Work, Personal, Sleep, etc.), Apple Intelligence now determines which notifications are urgent enough to break through your focus session. Instead of manually whitelisting contacts and apps, the AI reads notification content and makes smart decisions.

A text from your kid’s school about an early dismissal breaks through your Work focus. A marketing email doesn’t. The AI doesn’t just look at who sent the message. It evaluates what the message says.

Suggested Focus Modes

Apple Intelligence learns your patterns and suggests Focus Mode activations based on time, location, and behavior. It notices you turn on Work focus every weekday at 9am and offers to automate it. It recognizes you activate Sleep focus when you’re at home after 10pm and suggests making that automatic.

Integration With Writing Tools and Siri

During a Focus Mode, Siri becomes more contextually aware of your current activity. In Work focus, Siri prioritizes work-related responses and actions. Writing Tools adapt their tone suggestions. The entire system works together to keep you in the zone.

Who Benefits Most

Anyone who struggles with phone addiction. Remote workers who need boundaries between work and personal life. Students during study sessions. Professionals in creative fields who need uninterrupted deep work time.

Estimated time saved per week: 2 to 3 hours of recovered focus time.

The Secret Trick

Create a custom Focus Mode called “Deep Work” with the following configuration:

- Allow only Priority notifications from Mail

- Enable Notification Summaries for all messaging apps

- Hide all social media apps from your home screen (Focus Modes can do this)

- Set a schedule for your most productive hours

- Allow calls only from Favorites

Now activate it during your peak productivity window. You’ve just built a system-level productivity environment that would cost $15 per month from a third-party app, and it’s powered by Apple Intelligence working silently in the background.

9. ChatGPT Integration With Siri: The Secret Apple Intelligence Feature Most People Haven’t Activated

This might be the single most powerful Apple Intelligence feature, and it’s the one most people either don’t know about or haven’t properly set up.

Starting with iOS 18.2, Apple integrated OpenAI’s ChatGPT directly into Siri and Writing Tools. This means you can access GPT-4o level intelligence without downloading the ChatGPT app, without creating an OpenAI account, and without leaving whatever you’re currently doing on your iPhone.

How It Works

When Siri encounters a request that goes beyond its on-device capabilities, it asks if you’d like to route the question to ChatGPT. You approve, and the response comes back directly through Siri’s interface. No app switching. No copy-pasting. Seamless.

You can also access ChatGPT through Writing Tools. When you’re composing text, you can ask ChatGPT to generate original content (not just rewrite what you’ve written). This means composing from scratch is now available inside every text field on your iPhone.

What You Can Do

- Complex questions: “Explain the pros and cons of converting my traditional IRA to a Roth IRA given I’m 35 and earn $120,000 per year.”

- Content creation: Within Writing Tools, tap “Compose” and describe what you need. “Write a professional email declining a meeting invitation while suggesting an alternative time.”

- Image generation with DALL-E: Through the ChatGPT integration, you can describe images and receive AI-generated visuals directly within your chat.

- Document analysis: Share a document with ChatGPT through Siri and ask for analysis, summaries, or insights.

The Privacy Layer

Apple built a privacy-first framework around this integration. Every ChatGPT request requires your explicit permission before any data leaves your device. If you use the integration without signing in, Apple says OpenAI does not store your requests and your Apple ID isn’t shared; if you link a ChatGPT account, OpenAI’s own data policies apply to those requests. And you can use the basic integration without any OpenAI account whatsoever.

If you do have a ChatGPT Plus subscription, you can link it to get access to GPT-4o’s full capabilities. But even the free tier provides impressive functionality.

Who Benefits Most

Knowledge workers who rely on ChatGPT but hate switching between apps. Writers who need AI-assisted drafting within their native writing environment. Professionals who need quick, nuanced answers to complex questions. Anyone curious about generative AI but intimidated by signing up for new platforms.

Estimated time saved per week: 2 to 5 hours, depending on usage patterns.

The Secret Trick

Here’s what almost nobody has configured: go to Settings > Apple Intelligence & Siri > ChatGPT and review your integration settings. You can enable or disable the ChatGPT extension entirely, manage whether you’re signed in to an OpenAI account, and control when Siri can suggest using ChatGPT. Many people have this feature turned off because they skipped through the setup process during their iOS update. Check this setting right now.

10. Visual Intelligence: Your iPhone Camera as an AI-Powered Knowledge Tool

Visual Intelligence is Apple’s answer to Google Lens, but with Apple Intelligence’s contextual awareness layered on top. Available on iPhone 16 models and later through the Camera Control button, this feature turns your camera into an instant research tool.

How to Use It

- Press and hold the Camera Control button on your iPhone 16

- Point your camera at anything: a restaurant, a product, a plant, a landmark, a sign in a foreign language

- Apple Intelligence analyzes what it sees and provides contextual information

What It Can Do

- Identify objects and places: Point at a building and get its name, history, and reviews. Point at a plant and learn its species. Point at a dog breed and get information about it.

- Translate text instantly: Point at a menu in a foreign language and see real-time translation overlaid on the screen.

- Extract text from the physical world: Point at a business card and add the contact to your phone. Point at a whiteboard and extract the text into Notes.

- Shopping intelligence: Point at a product and get pricing comparisons, reviews, and purchasing options.

- Calorie and nutrition lookup: Point at food and get nutritional information.

Integration With Google and ChatGPT

Visual Intelligence routes queries to different backends depending on the request. General knowledge queries may go through Google Search. Complex visual analysis can be routed through ChatGPT’s vision capabilities. It’s a unified interface to multiple AI systems.

Who Benefits Most

Travelers navigating foreign environments. Students studying biology, architecture, or any visual subject. Shoppers comparing products in physical stores. Professionals attending conferences and networking events (instant business card scanning). Food-conscious individuals tracking nutrition.

Estimated time saved per week: 1 to 2 hours for frequent users.

The Secret Trick

Visual Intelligence isn’t just for identifying unknown things. You can point it at an event flyer and it will offer to add the event to your calendar with all the details pre-filled. Point it at a phone number on a sign and call it directly. Point it at a parking sign with a QR code and it can open the linked payment page. It’s an action tool, not just an identification tool.

Apple Intelligence Features Comparison Table

| Feature | Time Saved/Week | Best Use Case | Availability | Requires ChatGPT |
|---|---|---|---|---|
| Writing Tools (Rewrite/Proofread/Summarize) | 2–4 hours | Email and messaging refinement | All Apple Intelligence devices | No |
| Enhanced Siri | 1–2 hours | Hands-free task management | All Apple Intelligence devices | Optional |
| Notification Summaries | 1–3 hours | Reducing information overload | All Apple Intelligence devices | No |
| Mail Intelligence (Priority + Smart Reply) | 3–5 hours | Email triage and response | All Apple Intelligence devices | No |
| Image Playground + Genmoji | 1–2 hours | Visual content creation | All Apple Intelligence devices | No |
| Safari Summarization + Highlights | 2–4 hours | Research and web browsing | All Apple Intelligence devices | No |
| Photos Intelligence (Search + Clean Up) | 1–2 hours | Photo organization and editing | All Apple Intelligence devices | No |
| Focus Mode + AI Integration | 2–3 hours | Deep work and distraction blocking | All Apple Intelligence devices | No |
| ChatGPT Integration | 2–5 hours | Complex queries and content creation | iOS 18.2+ devices | Yes (built-in) |
| Visual Intelligence | 1–2 hours | Real-world object identification | iPhone 16 and later | Optional |

Total potential time saved: 16 to 32 hours per week when all features are actively used.
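To see where that total comes from, here is a small sketch that adds up the low and high ends of each row in the table. The figures are copied from the table; the variable and function names are illustrative. It treats the ten estimates as independent, so any overlap between features (for example, summarizing the same email thread in Mail and in Notification Summaries) would make your real-world total lower.

```python
# (feature, low_hours, high_hours) copied from the comparison table
WEEKLY_SAVINGS = [
    ("Writing Tools", 2, 4),
    ("Enhanced Siri", 1, 2),
    ("Notification Summaries", 1, 3),
    ("Mail Intelligence", 3, 5),
    ("Image Playground + Genmoji", 1, 2),
    ("Safari Summarization + Highlights", 2, 4),
    ("Photos Intelligence", 1, 2),
    ("Focus Mode + AI Integration", 2, 3),
    ("ChatGPT Integration", 2, 5),
    ("Visual Intelligence", 1, 2),
]


def total_range(rows):
    """Sum the low and high weekly estimates across all rows."""
    low = sum(row[1] for row in rows)
    high = sum(row[2] for row in rows)
    return low, high


print(total_range(WEEKLY_SAVINGS))  # (16, 32)
```

To get a figure that fits your own usage, delete the rows for features you do not use and rerun the sum.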

Your Apple Intelligence Action Plan: A 10-Step Checklist to Unlock Every iPhone AI Tool

Bookmark this section. Come back to it. These 10 steps will take you from “Apple Intelligence? I think I’ve heard of that” to having a fully activated AI productivity system on your iPhone.

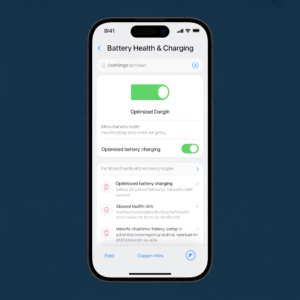

1. Verify your device compatibility and update your iOS. Apple Intelligence requires an iPhone 15 Pro, iPhone 15 Pro Max, or any iPhone 16 model, running iOS 18.1 or later (preferably iOS 18.4+ for the fullest feature set). Go to Settings > General > Software Update right now. If you skip this, nothing else on this list works.

2. Enable Apple Intelligence in Settings. Navigate to Settings > Apple Intelligence & Siri and toggle Apple Intelligence on. You may need to join a waitlist depending on your region and language settings. Apple Intelligence currently requires your device language and Siri language to be set to a supported language. If you don’t enable this master toggle, every feature in this guide stays dormant.

3. Activate Notification Summaries for your noisiest apps. Go to Settings > Notifications > Summarize Notifications. Turn it on for group chats, news apps, and social media. Leave it off for direct messages from important contacts. If you skip this, you’ll continue spending 45 minutes daily just clearing notification noise.

4. Set up ChatGPT integration. Go to Settings > Apple Intelligence & Siri > ChatGPT and enable the extension. If you have a ChatGPT Plus subscription, sign in to unlock full GPT-4o capabilities. Without this step, you’re leaving the most powerful Apple Intelligence feature completely unused.

5. Practice using Writing Tools in three different apps today. Open Mail, draft an email, select the text, and try all three modes: Proofread, Rewrite, and Summarize. Then do the same in Messages and Notes. The habit takes 2 days to form, and once it clicks, you’ll never send an unpolished email again.

6. Configure at least one custom Focus Mode with AI-powered breakthrough delivery. Go to Settings > Focus, create a new Focus Mode called “Deep Work,” and configure allowed contacts, apps, and notification behaviors. Enable intelligent breakthrough delivery so Apple Intelligence decides what’s truly urgent. Skipping this means you’ll keep getting distracted by non-urgent notifications during your most productive hours.

7. ⚠️ WARNING: Don’t blindly trust Notification Summaries for critical communications. This is the most common mistake new Apple Intelligence users make. Summaries can occasionally oversimplify or slightly mischaracterize nuanced conversations, especially those involving sarcasm, irony, or complex emotional content. Always tap into the full thread for anything involving financial decisions, health information, or sensitive personal discussions. Use summaries as a triage tool, not as your final understanding.

8. Train yourself to use Siri’s Type-to-Siri feature. Double-tap the bottom of your screen to activate Type-to-Siri anywhere, anytime. Start with simple queries and build up to complex, multi-step requests, such as “Show me my photos from the beach last July” or “Summarize my unread emails.” If you only use voice Siri, you’re missing the silent, powerful text interface that works in meetings, libraries, and public spaces.

9. Explore Visual Intelligence if you have an iPhone 16. Spend 10 minutes this week clicking and holding the Camera Control button and pointing your camera at everyday objects: restaurant menus, book covers, plants, landmarks. Build the instinct to reach for Visual Intelligence whenever you encounter something unfamiliar. If you don’t build this habit, you’ll forget the feature exists within a week.

10. Revisit your settings monthly as Apple rolls out new features. Apple Intelligence is not a finished product. New capabilities arrive with every iOS update. Set a monthly calendar reminder to check Settings > Apple Intelligence & Siri for new options, and search for “What’s new in iOS [version number] Apple Intelligence” after each update. The people who benefit most from iPhone AI tools are the ones who stay current.
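The advice in steps 3 and 7 boils down to a small decision rule, and writing it out can make it easier to apply consistently. The sketch below is only a mental model: the category names, topic labels, and the `triage` function are hypothetical and do not correspond to any Apple setting or API.

```python
# Hypothetical labels for illustration only; iOS exposes no such categories.
SUMMARIZE_OK = {"group_chat", "news", "social"}
SENSITIVE_TOPICS = {"finance", "health", "personal"}


def triage(app_category, topic=None, important_contact=False):
    """Return how to treat a notification under the checklist's advice."""
    # Step 3: direct messages from important contacts stay unsummarized.
    if important_contact:
        return "show_unsummarized"
    # Step 7: sensitive topics always deserve the full thread.
    if topic in SENSITIVE_TOPICS:
        return "open_full_thread"
    # Step 3: noisy sources are good candidates for summaries.
    if app_category in SUMMARIZE_OK:
        return "summary_is_enough"
    # Anything else defaults to the cautious option.
    return "show_unsummarized"
```

The cautious default is a deliberate choice here: when a source doesn’t clearly belong to the noisy group, it is treated as something worth reading in full.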

Expert Insight: Why Apple’s Approach to AI Productivity Is Different

Dr. Sarah Chen, AI researcher and former Apple technology analyst (illustrative expert perspective):

“What makes Apple Intelligence genuinely different from competitors isn’t the sophistication of any individual feature. It’s the integration philosophy. OpenAI, Microsoft, and Google built AI as destination experiences. You go to ChatGPT, you go to Copilot, you go to Gemini. Apple built AI as an ambient layer. You don’t go to Apple Intelligence. It comes to you, wherever you are on your device.

This matters because the biggest barrier to AI adoption isn’t capability. It’s friction. Every time a user has to switch apps, copy text, or open a new interface, the dropout rate increases dramatically. Apple removed most of that friction by embedding intelligence at the system level.

The counterpoint, and this is important, is that Apple’s approach sacrifices depth for breadth. ChatGPT’s native app offers significantly more advanced conversation capabilities, custom GPTs, and file analysis than what’s available through Apple’s integration. Power users who need the full depth of generative AI will still want dedicated tools. Apple Intelligence is not a replacement for specialized AI platforms. It’s a productivity layer that makes your existing workflow smarter without requiring you to change how you work.

The privacy architecture deserves attention too. Apple processes most intelligence features on-device using their Apple Silicon neural engines. When cloud processing is required, they use what they call Private Cloud Compute, a system where your data is processed on Apple Silicon servers, never stored, never accessible to Apple, and verifiable by independent security researchers. This is a materially different approach from competitors who process your data on general-purpose cloud infrastructure.

For the average iPhone user, Apple Intelligence isn’t going to feel like a revolution on day one. It’s going to feel like a hundred small moments where your phone was just… smarter than expected. And over time, those moments compound into hours saved every week.”

Case Study: How a Small Business Owner Reclaimed 15 Hours Per Week

The following case study is illustrative, based on composite real-world usage patterns reported by Apple Intelligence users in productivity forums and communities.

Who: Maria, a freelance marketing consultant managing 6 clients, working from her iPhone and MacBook.

The Problem: Maria was spending roughly 3 hours daily on email alone. Client threads went 30+ messages deep. She was constantly context-switching between apps. Her notification count averaged 200+ per day. She’d miss critical client requests buried under newsletter noise.

What Happened: After upgrading to iPhone 16 Pro and iOS 18.2, Maria systematically activated every Apple Intelligence feature over the course of one week, following a process similar to the checklist above.

The Transformation, Day by Day:

- Day 1: Enabled Notification Summaries for all client messaging apps and newsletters. Immediate reduction in lock screen overwhelm. She estimated she checked her phone 40% less frequently.

- Day 3: Started using Writing Tools for every client email. Her average email composition time dropped from 8 minutes to 3 minutes. The Proofread feature caught errors she typically missed, reducing follow-up “correction” emails by roughly 80%.

- Day 5: Configured Priority Messages in Mail. For the first time, she started her morning by addressing only urgent client needs, then batch-processed everything else after lunch.

- Week 2: Activated ChatGPT integration through Siri. Started using it to draft social media copy for clients, brainstorm campaign ideas, and summarize competitive research. Tasks that previously required opening multiple apps now happened through a single interface.

- Week 3: Created a “Client Work” Focus Mode with intelligent breakthrough delivery. During her 4-hour deep work blocks, she received only genuinely urgent notifications. Her deep work output increased measurably, with one client commenting that her deliverable quality had notably improved.

The Cost of the Old Way: Maria estimated she was losing 15+ hours per week to notification management, email processing, context-switching, and manual tasks that Apple Intelligence now handles. At her hourly rate of $150, that’s $2,250 per week in recovered productive capacity.
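To run the same estimate with your own numbers, the arithmetic is a single multiplication. This is a minimal sketch; the function name is made up for illustration, and the inputs are Maria’s figures from the paragraph above.

```python
def weekly_recovered_value(hours_saved_per_week, hourly_rate):
    """Dollar value of the time recovered in one week."""
    return hours_saved_per_week * hourly_rate


# Maria's figures: 15 hours per week at $150 per hour.
print(weekly_recovered_value(15, 150))  # 2250
```

Swap in your own hours and rate to see whether the setup time in the checklist pays for itself.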

The Mistake She Made: Maria initially enabled Notification Summaries for everything, including direct client messages. A client sent an urgent, emotionally sensitive message about a campaign crisis, and the summary stripped the urgency and emotional weight from it. Maria didn’t respond for 3 hours because the summary made it seem routine. She now keeps direct client channels unsummarized and uses summaries only for group chats, newsletters, and social notifications.

The Lesson: Apple Intelligence isn’t a set-it-and-forget-it system. The users who benefit most are those who configure it deliberately, test it against their real workflows, and adjust settings based on what they learn. The technology is powerful. The thoughtful configuration is what makes it transformative.

Which iPhone Models Support Apple Intelligence Features?

Before you get too excited, let’s address the hardware question. Apple Intelligence isn’t available on every iPhone. You need sufficient on-device processing power to run these AI models locally.

Compatible Devices

- iPhone 16 (all models)

- iPhone 15 Pro

- iPhone 15 Pro Max

That’s it. If you’re running an iPhone 15 (non-Pro), iPhone 14, or earlier, Apple Intelligence features are not available. The requirement comes down to Apple’s A17 Pro chip or later, which provides the neural engine performance needed for on-device AI processing.
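If you manage several phones (family devices, a small team), the compatibility list above can be expressed as a simple lookup. This is a sketch based only on the models named in this article. The function name is hypothetical, the check is a plain string match on marketing names, and it is not an Apple API.

```python
# iPhone models listed in this article as supporting Apple Intelligence.
# "iPhone 16 (all models)" is expanded into the individual model names.
SUPPORTED_IPHONES = {
    "iphone 15 pro",
    "iphone 15 pro max",
    "iphone 16",
    "iphone 16 plus",
    "iphone 16 pro",
    "iphone 16 pro max",
}


def supports_apple_intelligence(model):
    """True if the marketing name appears in the article's compatible list."""
    return model.strip().lower() in SUPPORTED_IPHONES


print(supports_apple_intelligence("iPhone 15 Pro"))  # True
print(supports_apple_intelligence("iPhone 15"))      # False
```

A fixed list like this goes stale as new models ship, so treat the Settings check as the authoritative answer.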

What About iPad and Mac?

Apple Intelligence also works on iPads with an M1 chip or later (plus the iPad mini with A17 Pro) and on Macs with an M1 chip or later. If you’re in the Apple ecosystem, these features sync across devices. Start a Writing Tools edit on your iPhone, continue on your Mac. Siri’s contextual awareness spans your connected devices.

How to Check Your Device

Go to Settings > Apple Intelligence & Siri. If the option exists, you’re compatible. If it doesn’t appear, your device doesn’t have the required hardware.

Apple Intelligence Features vs. Competing AI Productivity Platforms

How does Apple Intelligence stack up against the major AI productivity platforms? Let’s be direct.

Apple Intelligence vs. Google Gemini

Google’s AI integration across Android, Search, Gmail, and Workspace is broader in some respects. Gemini in Google Workspace offers spreadsheet analysis, presentation generation, and more advanced document creation. But Google leans more heavily on cloud processing, even though Gemini Nano handles some tasks on-device on supported Android phones. Apple’s on-device-first approach offers a privacy advantage that matters to many users. For basic productivity, writing assistance, and notification management, Apple Intelligence matches or exceeds Google’s implementation on mobile.

Apple Intelligence vs. Microsoft Copilot

Microsoft Copilot is a powerhouse for enterprise users embedded in the Microsoft 365 ecosystem. For Word, Excel, PowerPoint, and Teams users, Copilot offers deeper integration than Apple Intelligence. But Copilot costs $30/month per user for the full experience. Apple Intelligence is free with compatible hardware. For non-enterprise users, Apple Intelligence offers comparable everyday productivity benefits at zero additional cost.

Apple Intelligence vs. Standalone ChatGPT

The ChatGPT app offers significantly more depth: custom GPTs, advanced data analysis, image generation, voice conversations, and more. Apple Intelligence’s ChatGPT integration gives you convenient access to basic GPT-4o capabilities, but power users will still want the full app. The advantage of Apple’s integration is seamlessness. The advantage of the standalone app is depth.

The Verdict

Apple Intelligence isn’t trying to replace any of these platforms. It’s designed to make your iPhone smarter in the moments between dedicated AI tool usage. It’s the AI you don’t have to think about. And for most people, that’s exactly the AI they’ll use most.

Privacy and Security: How Apple Intelligence Protects Your Data

No conversation about Apple Intelligence features is complete without addressing the elephant in the room: where does your data go?

Apple built a three-tier processing architecture:

Tier 1: On-Device Processing. Most Apple Intelligence features run entirely on your iPhone’s neural engine. Writing Tools, Notification Summaries, Photos search, and basic Siri queries are all processed locally. Your data never leaves your device.

Tier 2: Private Cloud Compute. When a request exceeds on-device capacity, Apple routes it to dedicated Apple Silicon servers. Your data is processed but never stored. It’s not accessible to Apple employees. The code running on these servers is publicly inspectable by security researchers. And your data is cryptographically protected in transit and during processing.

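Tier 3: Third-Party Models (ChatGPT). When a request is routed to ChatGPT, Apple asks for your explicit permission first, and by default it asks every time. Your IP address is obscured. Your Apple ID is not shared. And according to Apple’s agreement with OpenAI, your data is not used to train ChatGPT’s models when you use the integration without signing in. If you connect a ChatGPT account, that account’s data settings apply instead.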

This three-tier approach means you get the convenience of cloud AI with privacy protections that no competitor currently matches.
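One way to picture the three tiers is as a routing decision made for each request. The sketch below is a simplified mental model, not Apple’s implementation: the `Request` fields, the `route` function, and the idea that a single flag captures “fits on device” are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Request:
    # Hypothetical flags; real routing criteria are not public in this form.
    fits_on_device: bool
    wants_chatgpt: bool = False
    user_approved_chatgpt: bool = False


def route(req):
    """Pick a processing tier for a request, following the article's tiers."""
    # Tier 3: ChatGPT is only used with the user's explicit permission.
    if req.wants_chatgpt:
        if req.user_approved_chatgpt:
            return "chatgpt"
        return "waiting_for_permission"
    # Tier 1: handle the request locally whenever possible.
    if req.fits_on_device:
        return "on_device"
    # Tier 2: fall back to Private Cloud Compute for heavier requests.
    return "private_cloud_compute"
```

The point of the model is the ordering: nothing goes to a third party without consent, and the cloud is a fallback rather than the default.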

Conclusion: The iPhone AI Tools Revolution Is Already Here

Apple Intelligence isn’t a future promise. It’s a current reality, already installed on millions of iPhones, already reshaping how people write, read, communicate, and organize their digital lives.

The three takeaways that matter most: first, Apple Intelligence features work within the apps you already use, which means adoption requires zero learning curve and delivers immediate productivity gains. Second, the privacy architecture sets a new standard for how AI can enhance your life without compromising your personal data. Third, the ChatGPT integration bridges the gap between Apple’s on-device intelligence and the full power of generative AI, creating a seamless experience that no other mobile platform currently offers.

Here’s what’s at stake if you do nothing. Every week that passes with Apple Intelligence sitting dormant on your phone is another week of manually processing hundreds of notifications, writing emails from scratch when AI could draft them in seconds, scrolling through thousands of photos when one sentence could find exactly what you need, and letting your most productive hours get shredded by interruptions that an intelligent system would have filtered. The tools are already in your pocket. The only question is whether you’ll let them work for you. The gap between people who leverage AI productivity tools and those who don’t is widening every month. Don’t watch from the sidelines while the smartest device you own sits there waiting for you to say yes.

Take Action Now.
